The map

Five amazing use cases shipped in public this fortnight, and which tool wins for each.

1. Image generation

Verdict: ChatGPT 5.5 (Images 2.0), outright.

Look at this Where's Wally 3D scene someone posted this week. Hundreds of distinct characters, fine detail across the whole frame, consistent illustration style, no melted typography. That's the proof of what Images 2.0 actually does.

Claude makes no raster images natively, so the comparison ends here. What's actually new: text rendered correctly inside images, and 8-image sets with consistent characters. You probably won't recreate Where's Wally on the first try, so start smaller. Copy-paste this:

Editorial illustration, 16:9, calm slate-blue and warm-cream palette. Render the text "Week of April 27" as a clean serif headline in the upper left, dark slate on cream. Below, a small abstract motif suggesting reflection: a calm wave receding, or a ribbon curling. No people.

Cost: 30 seconds. Saved you a Figma round trip.

Watch out: Images 2.0 is reliable on headlines and headers, still inconsistent on tiny body text under 12 pixels. Don't ask it to render fake dashboard UIs.

2. Design language for an app

Verdict: ChatGPT 5.5.

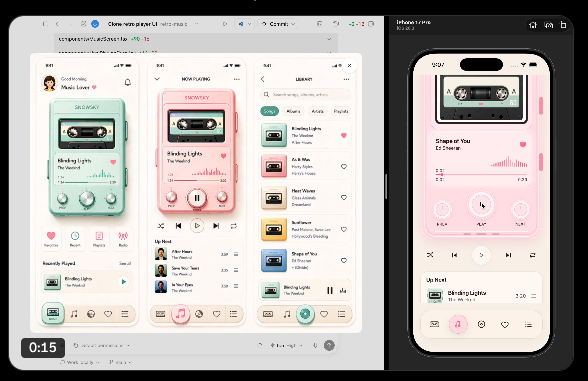

Alex Finn ran a clean demo this week: paste a screenshot of an app you like, ask ChatGPT 5.5 to extract its design language (color palette, typography, spacing, component vocabulary), and get back a structured spec you can hand to Codex, Claude Design, or a human designer.

The use case: you're building something new and want it to feel like Linear, Notion, or your favorite indie app. Skip the "what fonts do they use" detective work. ChatGPT pulls it from one screenshot.

Pro tip: Feed it 2 screenshots, not 1. Hero screen plus a settings page. The contrast helps the model generalize the system instead of describing the literal screen.
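
To make "structured spec" concrete, here is a minimal sketch of what one could look like once it comes back. Everything in it is an assumption for illustration: the `DesignSpec` shape, the field names, the `toCssVariables` helper, and the placeholder values are hypothetical, not ChatGPT's actual output format and not taken from any real app.

```typescript
// Hypothetical shape for an extracted design language.
// Field names are illustrative; ask the model for whatever structure suits your handoff.
interface DesignSpec {
  palette: { [role: string]: string }; // role name -> hex color
  typography: { family: string; sizesPx: number[]; weights: number[] };
  spacing: { baseUnitPx: number; scale: number[] }; // scale entries multiply the base unit
  components: string[]; // the component vocabulary seen in the screenshots
}

// Placeholder values only, not measured from any real product.
const exampleSpec: DesignSpec = {
  palette: { background: "#FAFAF7", text: "#1F2933", accent: "#5E6AD2" },
  typography: { family: "Inter, sans-serif", sizesPx: [12, 14, 16, 24], weights: [400, 600] },
  spacing: { baseUnitPx: 4, scale: [1, 2, 4, 6] },
  components: ["sidebar", "command palette", "list row", "pill badge"],
};

// Turn the spec into CSS custom properties a developer or coding tool can drop in.
function toCssVariables(spec: DesignSpec): string {
  const lines: string[] = [];
  Object.keys(spec.palette).forEach((role) => {
    lines.push(`  --color-${role}: ${spec.palette[role]};`);
  });
  lines.push(`  --font-family: ${spec.typography.family};`);
  spec.spacing.scale.forEach((multiplier, i) => {
    lines.push(`  --space-${i}: ${multiplier * spec.spacing.baseUnitPx}px;`);
  });
  return `:root {\n${lines.join("\n")}\n}`;
}

console.log(toCssVariables(exampleSpec));
```

Asking for the spec in a fixed shape like this keeps the handoff mechanical: whoever builds the app next gets named tokens rather than a prose description of one screen.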

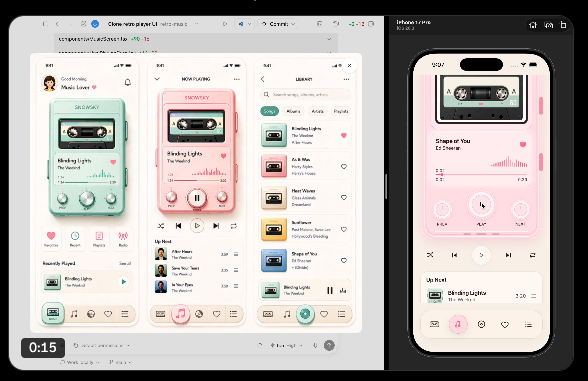

3. A music app, designed and natively built in React

Verdict: ChatGPT 5.5 (Canvas + Codex).

thebuggeddev shipped a working music player this week, designed and coded by Codex inside Canvas. Native React, real audio, real animations, real interactions. The kind of thing that used to take a developer a week.

The use case: prototypes that feel real, not Figma wireframes. If you've been pitching app ideas in mockup form, Codex now lets you ship a working version of the same idea in under an hour. Better demos, better feedback, better internal buy-in.

Watch out: The apps Codex generates work as prototypes, not production code. The output is real React, but you'll want a developer to review it before you put real users on it.

4. Long-form writing in your voice

Verdict: Claude (Opus 4.7).

I tested this in both tools this weekend by drafting a 1,200-word Beyond the Buzz issue in my voice, with my voice file loaded as context. Opus 4.7 nailed the rhythm on the second pass. ChatGPT 5.5 produced a clean draft, but the cadence was generic and the AI tells were back. If you've spent time training a voice file, that work is portable to ChatGPT, but you'll spend longer correcting tone than you would have spent writing it yourself.

5. Build a shareable web app from a prompt

Verdict: Tied. Picks differ by what you want next.

ChatGPT Canvas + Codex if you want a share link in 20 minutes that someone can open and use immediately (the music app in Use case 3 is the proof). Claude Design + Code if you want the design to look right out of the gate and you want a clean handoff to an engineer. I would use Canvas for prototypes I'll throw away. I will still use Claude for prototypes I think might survive.
